Cohen's Kappa and Fleiss' Kappa: How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

KappaSC: A Measure of Agreement on a Single Rating Category for a Single Item or Object Rated by Multiple Raters

Inter- and intra-rater agreement for a single item of the Mini-Balance...

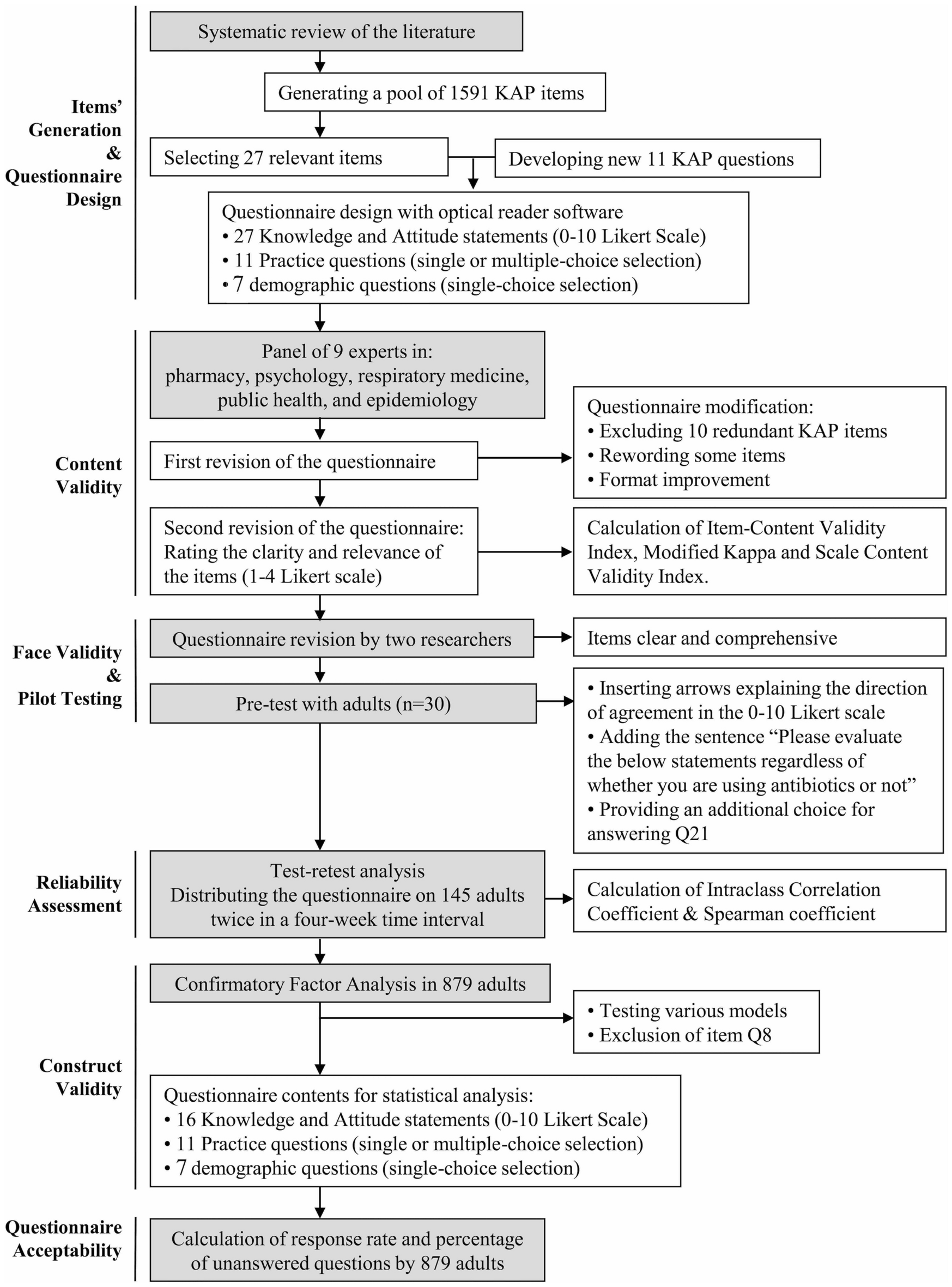

Design, reliability and construct validity of a Knowledge, Attitude and Practice questionnaire on personal use of antibiotics in Spain | Scientific Reports